Why Significance Test Results Change When Columns Are Added Or Removed

When columns (or rows) are added to or removed from a table, the results flagged as significant may change. There are two non-obvious scenarios in which this occurs:

- By default, Q applies the False Discovery Rate Correction to tables. This is a type of multiple comparison procedure. A consequence of this correction, and of multiple comparison corrections in general, is that when data is added or removed, results that were previously marked as significant can cease to be significant, and vice versa.

- Inappropriate statistical testing assumptions are being used.

Scenario 1: The change is due to multiple comparison corrections

Example

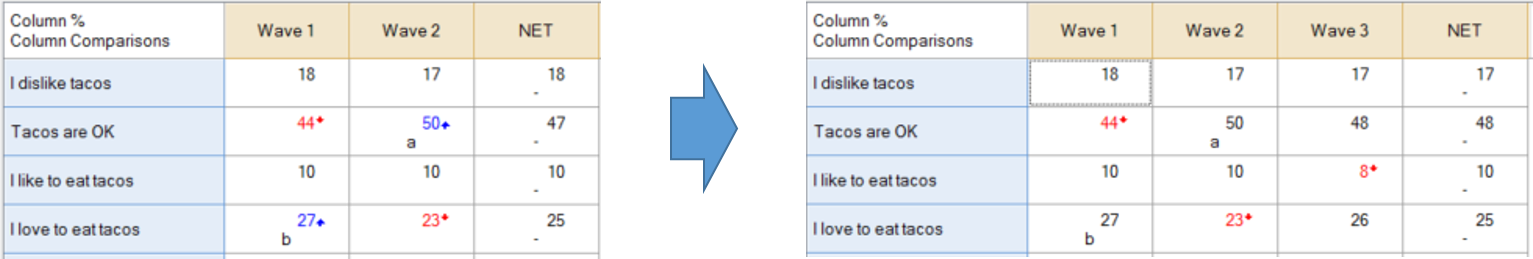

In this example, after two waves were collected, two of the comparisons are shown as significant. When a third wave of data was included, the results previously marked as significant were no longer marked as significant.

Explanation

Consider the Tacos are OK row. When we only have two waves of data, the difference between 44% and 50% is marked as being significant. There are two possible explanations for this result. Explanation 1 is that the research has revealed that the proportion of people in the market who believe Tacos are OK has increased. Explanation 2 is that it is just a fluke result (e.g., due to sampling error).

Tests of statistical significance are designed to give some insight into which of these explanations is correct. Based on the information in the table on the left, the educated guess provided by the statistical test is that the difference between 50% and 44% reflects a difference in the market (i.e., Explanation 1).

Now consider the implication of these conclusions for Wave 3. If Explanation 1 is true, we might expect the increase to continue in Wave 3, giving a result higher again than the 50% from Wave 2. If Explanation 2 is correct, we would expect the result in Wave 3 to be somewhere between Wave 1 and Wave 2 (i.e., if the difference between Waves 1 and 2 is a fluke, our best guess for Wave 3 is that it would fall in between the results of the first two waves). As the result for Wave 3 is 48%, which is in between the results from Waves 1 and 2, the data from Wave 3 tends to support Explanation 2 (i.e., that the difference between Wave 1 and Wave 2 is not statistically significant). This is why the results are no longer significant in the updated table: the additional data allows us to make a more informed conclusion about the differences between Waves 1 and 2.

It is important to note that while, in this example, the absence of a trend is what changes the conclusions, multiple comparison corrections do not explicitly test for trends. Rather, they evaluate the extent to which the number and magnitude of the differences in a table are consistent with the idea that the differences are due to sampling error. For example, in the specific analysis described above, if the Wave 2 result had been, say, 55%, then this difference would have remained significant irrespective of the results of Wave 3 (i.e., the difference would be so large that additional information would be irrelevant).
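The effect described above can be illustrated with a minimal sketch. It assumes the Benjamini-Hochberg procedure, a common form of False Discovery Rate correction; Q's exact implementation may differ. The function name `benjamini_hochberg` and all p-values are hypothetical and are not taken from the tables in this article.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return a list of booleans: True where a test remains significant
    after a Benjamini-Hochberg correction (illustrative sketch only)."""
    m = len(p_values)
    # Indices of the tests, ordered from smallest to largest p-value.
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k whose p-value is at or below (k / m) * alpha.
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            cutoff_rank = rank
    # Every test ranked at or before the cutoff is flagged as significant.
    significant = [False] * m
    for idx in order[:cutoff_rank]:
        significant[idx] = True
    return significant


# Hypothetical p-values for the comparisons on a table.
two_waves = [0.03, 0.04]                   # two comparisons only
three_waves = [0.03, 0.04, 0.50, 0.60]     # same two, plus two weak new ones
strong_effect = [0.001, 0.04, 0.50, 0.60]  # first difference is very large

print(benjamini_hochberg(two_waves))
print(benjamini_hochberg(three_waves))
print(benjamini_hochberg(strong_effect))
```

With these made-up inputs, both comparisons are flagged when only two tests are on the table, neither is flagged once two weak comparisons are added, and a comparison with a very small p-value stays flagged regardless. The threshold each p-value must beat depends on how many tests are on the table, which is why adding or removing columns can change the flags.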

Modifying the multiple comparison settings

In situations where consistency is more important than rigor, it can be useful to turn off multiple comparison corrections. This is done in Statistical Assumptions.

Scenario 2: Inappropriate testing assumptions

The example below shows the same data as above, but with the multiple comparison corrections set to None. Note that the column letters are consistent, and will always be consistent with such testing assumptions. However, the colors and arrows remain inconsistent, with Wave 2 still not showing as significant. This is because, by default, the colors and arrows test against the complement. In the first table, the Wave 2 result is being compared against Wave 1, whereas in the second table, Wave 2 is being compared against the combined data from both Waves 1 and 3, and it is not significantly different. See How To Test Between Adjacent Time Periods for an explanation of how to instead test Wave 2 vs Wave 1 and Wave 3 vs Wave 2.
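The difference between testing against one column and testing against the complement can be sketched as follows. This assumes a simple pooled two-proportion z-test, which may not be the test Q applies. The percentages (44%, 50%, 48%) come from the example above, but the sample size of 600 per wave and the function name `two_proportion_p_value` are hypothetical.

```python
import math


def two_proportion_p_value(x1, n1, x2, n2):
    """Two-sided p-value from a pooled two-proportion z-test (sketch only)."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    # erfc(|z| / sqrt(2)) equals 2 * (1 - Phi(|z|)) for a standard normal.
    return math.erfc(abs(z) / math.sqrt(2))


# (count agreeing, sample size) per wave; the sample sizes are assumed.
wave1 = (264, 600)  # 44%
wave2 = (300, 600)  # 50%
wave3 = (288, 600)  # 48%

# Two-wave table: the complement of Wave 2 is just Wave 1.
p_vs_wave1 = two_proportion_p_value(*wave2, *wave1)

# Three-wave table: the complement of Wave 2 is Waves 1 and 3 combined (46%).
complement = (wave1[0] + wave3[0], wave1[1] + wave3[1])
p_vs_complement = two_proportion_p_value(*wave2, *complement)

print(f"Wave 2 vs Wave 1:     p = {p_vs_wave1:.3f}")
print(f"Wave 2 vs complement: p = {p_vs_complement:.3f}")
```

Under these assumed sample sizes, the first p-value falls below 0.05 and the second does not, even though no multiple comparison correction is applied. The Wave 2 result has not changed; only the group it is being compared against has.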